The market for automotive lidar, enabling autonomous vehicles to ‘see’, is expected to reach almost $4 billion by 2026.

Manufacturers have been working on autonomous cars for decades, but the prospect (some would say, spectre) of driverless cars has become more likely in the past 10 years. A level-four autonomous car is one that requires no human attention at all within the conditions it is designed for, and to even get close to that, it will need at the very least vision and cognitive powers akin to a human’s.

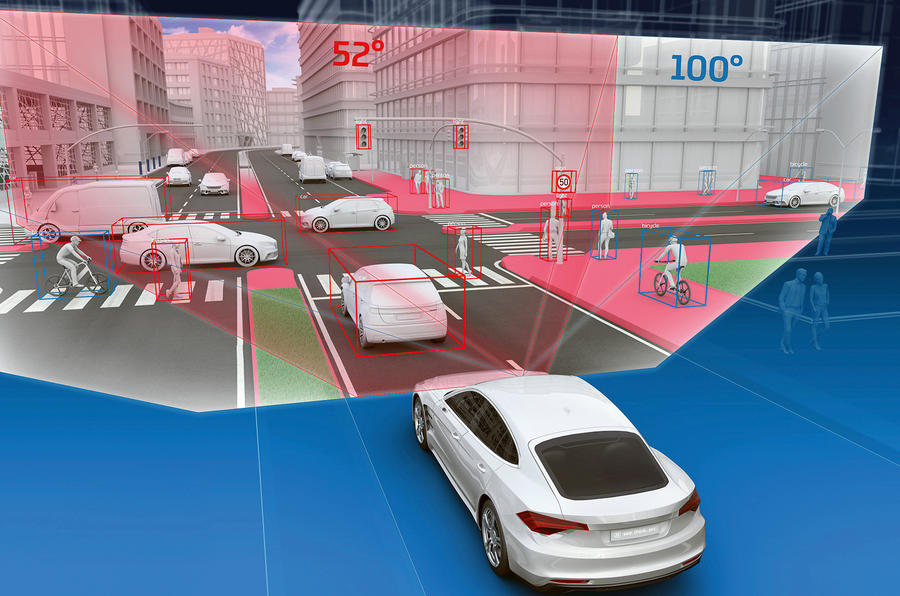

Leaving the cognitive aspect to one side, the seeing bit is still the subject of intensive research but US company Lumotive, founded in 2018, might have the answer. Vehicles need a digital representation of what a driver sees: a high-definition 3D map of their surroundings, including the size and position of all the objects around them. They also need to know how fast any objects are moving and in which direction.

Lidar (light detection and ranging) is still seen as essential to full autonomy. It works by scanning the scene with pulses of laser light, which are reflected back to create a ‘point map’ of the surroundings. Doing so originally involved installing a whopping great revolving scanner on the roof of research cars to send and receive the pulses of light, in the same way that radar (radio detection and ranging) sends and receives radio signals. Lidar is capable of locating objects to within a few centimetres at a distance of several hundred metres.
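For readers who like to see the sums, the pulse-and-echo idea can be sketched in a few lines of Python. This is only an illustration and assumes simple pulsed time-of-flight ranging, which the article does not confirm Lumotive uses. The function names and the sample echoes are hypothetical.

```python
import math

# Speed of light in a vacuum, in metres per second.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def range_from_round_trip(round_trip_s: float) -> float:
    """Distance to the reflecting object from the pulse's round-trip time.

    The pulse travels out and back, so the one-way distance is half
    the total path covered in that time.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def to_point(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert a range plus beam direction into an (x, y, z) point in metres.

    Assumed convention (hypothetical): x points forward, y to the left,
    z up; azimuth is measured in the horizontal plane from the x axis,
    elevation upward from that plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    x = range_m * math.cos(el) * math.cos(az)
    y = range_m * math.cos(el) * math.sin(az)
    z = range_m * math.sin(el)
    return (x, y, z)


# Hypothetical echoes: (round-trip time in seconds, azimuth, elevation).
echoes = [
    (1.0e-6, 0.0, 0.0),     # object roughly 150 m straight ahead
    (2.0e-7, 30.0, 0.0),    # object roughly 30 m away, off to one side
    (1.0e-7, -15.0, 5.0),   # object roughly 15 m away, slightly raised
]

# Each echo becomes one point; together they form the 'point map'.
point_map = [
    to_point(range_from_round_trip(t), az, el) for t, az, el in echoes
]
```

A real sensor gathers far more echoes per second than this, but the principle is the same: one timed echo and one beam direction give one point in the map.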

Further developments led to the miniaturisation of the concept by replacing hefty revolving scanners with laser arrays or MEMS (micro electro-mechanical system) mirror lidar. The MEMS systems use tiny revolving mirrors to reflectively ‘steer’ the laser beam around a scene.

In contrast, the Lumotive Meta-Lidar Platform is based on Light Control Metasurface (LCM) solid-state, beam-steering chips to enable the deflection, or ‘steering’, of a laser source without the need for any moving parts. A metasurface is one that can change its condition under electronic control.

In this case, the reflecting surface is liquid-crystal-based and can be made from conventional materials using well-established manufacturing techniques. The surface is software controlled and can be configured for different applications instead of making mechanical changes to the chip. A second LCM receives the reflected laser pulse, which is processed to build up a high-definition picture (as a point cloud) of the scene. The Meta-Lidar is scalable, so it can be made for both short- and long-range use.

Earlier this year, Lumotive and automotive lighting specialist ZKW showed a fully functional headlight unit incorporating a Meta-Lidar Platform M30 module to illustrate just how well it can be integrated into vehicles. The high-res M30 is the size of a golf ball and has a 20-metre range and a wide field of view. It’s the first in a series of modules that will have a range of up to 200 metres and can identify forms as small as 1cm³ for integration into phones and other devices.

Jesse Crosse